This year's WWDC was going to be the most incredible of all. Not just because we said so: Tim Cook himself posted as much moments before the start of what would be the biggest WWDC to date. And so it has been. Apple has presented a worldwide revolution with the Apple Vision Pro. We tell you everything you need to know about the new Apple glasses.

There was One More Thing. We expected it. Tim took the stage in the spot where every One More Thing is announced, with that iconic background behind him. The Apple Vision Pro was finally a reality: a new AR/VR platform that aims to revolutionize the industry for good.

We had been surrounded by rumors for a long time. The Reality Pro was going to become real today, and the first surprise was the name itself. Gone are the long days of calling it the Reality Pro; from now on we will know it as the Apple Vision Pro. Something similar has happened with the operating system: all those registrations of the xrOS name were for nothing. visionOS is the final name of the operating system, and its entire interface, that powers the Apple Vision Pro.

The interface: an old acquaintance

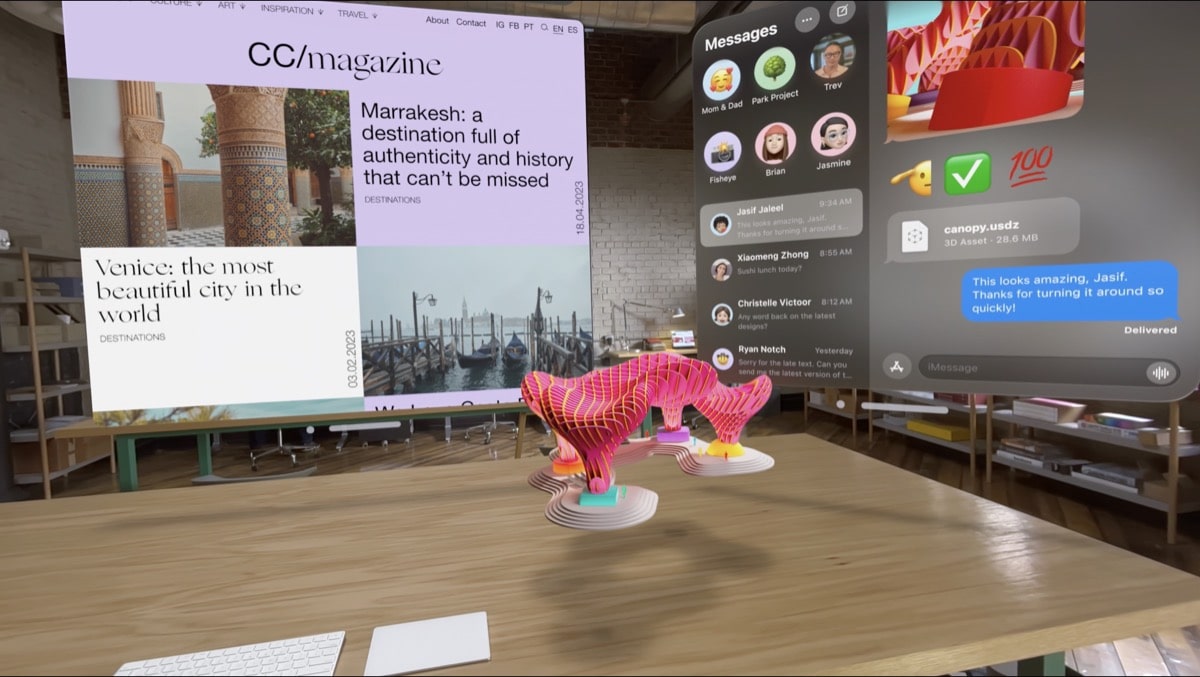

visionOS is built around an interface of apps and windows, like the ones we know from any iPhone, iPad or Mac. That includes the familiar icons we have already used on other operating systems, such as those of the Photos and Safari apps.

visionOS brings everything we already know into a new system and a new interface, so interacting with it won't feel strange and we'll know what to do almost without asking. We can resize everything, move it and group it as we want. 3D space is our canvas and we are the creators.

The interface also responds to the outside world, so we will have the feeling that it is physically there. The light in the room you're in affects it, it casts shadows, and it adapts to the environment to project surround sound, so that each element feels present and sounds as if it were at the distance where it is displayed. It is simply spectacular.

We will also have “Environments”. If we do not want to see our room behind (or rather "between") the interface, we can switch to another Environment, which simply replaces the room we are in with the countryside, a beach or whatever else Apple implements. We could be watching a movie in the middle of a mountain landscape and make our screen look as big as a cinema screen, or much bigger.

Last but not least, Apple Vision Pro will project the desktop of your Mac. No need for monitors. The Apple Vision Pro is itself a resizable 4K monitor for your Mac. This is incredible: being able to work with anything on your Mac's desktop at any size, and with the Mac's own accessories. Yes, they are compatible. We can also use our Magic Keyboard and Magic Trackpad to navigate the interface, enter text, and so on.

We will not be limited to what we have on our Mac, either. The Apple Vision Pro will sync with iPhone and iCloud, so we will have all our documents, info, reminders, notes, contacts and more directly in visionOS.

Revolution in the control of the Vision Pro

Apple's new augmented reality glasses do not work quite as we expected: we will control them not only with hand gestures, as rumored, but with a combined system of eyes, gestures and voice.

The Apple Vision Pro will understand where we are looking by tracking our eyes, and will select the part of the interface that we want to "touch" or interact with. From there, we will confirm the action with hand gestures we already know (a pinch, for example) and with new ones for moving the interface around the 3D environment in front of us. Because yes, we will interact with a 3D interface; it will not be just a flat virtual monitor.

We will also use our voice to navigate and interact with the interface. For example, we will be able to dictate a website address in Safari (unless we prefer to type it on a Magic Keyboard, for example).

As Apple pointed out, we have gone from the iPhone's Multi-Touch, which was a real revolution, to a new interaction system for the Apple Vision Pro. The re-revolution.

EyeSight Technology

The first image of the Apple Vision Pro on a person showed us a woman from the front, looking at us, with her eyes perfectly visible despite the virtual and augmented reality glasses she was wearing. This is thanks to the technology that Apple has called EyeSight.

With EyeSight, Apple Vision Pro will sense when people are nearby and project your eyes onto an external screen, giving the impression that the glasses are transparent. In addition, thanks to the built-in eye tracking and gaze detection, it will be easy for the other person to tell whether you are talking to them, since it will be as if you were not wearing anything. They will see that you are looking at them and interacting with them.

Apple doesn't want the Vision Pro to isolate you in another world. It wants you to remain part of this one, to blend the two, to stay human and not lose the interaction with others. That's EyeSight.

From Face ID to Optic ID

The Apple Vision Pro will use a new biometric recognition system that Apple has called Optic ID. It is based on scanning and identifying your iris. It is presented as being just as secure as Face ID, and it relies on the same eye-sensing hardware that follows our gaze to navigate the interface. We will remain as safe as ever, whether we are wearing our Apple Vision Pro or leaving it unattended.

The technical part: how is it all possible?

At the hardware level, we will find some familiar elements. We will have the same controls as the AirPods Max, including the Digital Crown, so physically interacting with the Vision Pro will not be anything new (and that is a good thing). It will be intuitive and easy to learn.

The design differs from the leaked renders, with Apple's characteristic aluminum finish and an external screen that will show our own eyes, as mentioned above.

On the inside, it has two micro-OLED displays, each with more pixels than a 4K TV, and a pixel density so high that 64 of its pixels fit in the space of a single iPhone pixel, so that our eye (or rather each of our eyes) does not notice in the slightest that it is in front of a screen. That's 23 million pixels between the two panels. Enough, right?
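To put those figures in perspective, here is a quick back-of-the-envelope check in Python. It is only a rough sketch: it assumes the 23 million pixels are split evenly between the two panels (a rounded figure), and it uses the standard 4K UHD resolution of 3840 x 2160 as the reference. The function name `pixels_per_panel` is just an illustrative helper.

```python
# Back-of-the-envelope check of the "more than 4K per eye" claim.
# TOTAL_PIXELS is the rounded figure quoted in the article; the even
# split between the two panels is an assumption made for illustration.
TOTAL_PIXELS = 23_000_000
PANELS = 2

# Standard 4K UHD resolution (3840 x 2160), used as the reference point.
UHD_4K_PIXELS = 3840 * 2160


def pixels_per_panel(total: int = TOTAL_PIXELS, panels: int = PANELS) -> int:
    """Approximate pixel count of one panel, assuming an even split."""
    return total // panels


per_eye = pixels_per_panel()
ratio = per_eye / UHD_4K_PIXELS

print(f"Pixels per eye (approx.): {per_eye:,}")
print(f"Pixels in a 4K UHD frame: {UHD_4K_PIXELS:,}")
print(f"Each panel has roughly {ratio:.2f}x the pixels of a 4K UHD frame")
```

Under those assumptions, each eye gets around 11.5 million pixels, comfortably above the roughly 8.3 million of a 4K UHD frame.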

We have 12 cameras that show us what is around us. There are also no fewer than five sensors, including a LiDAR scanner, responsible for locating objects and identifying our gestures to move through the interface, together with six microphones to capture sound from anywhere around us. And, of course, it plays sound back as spatial audio, adapting it to the room we are in, in the purest HomePod style.

More hardware? Of course. The Apple Vision Pro comes with an M2 chip to process everything, together with a new R1 chip that Apple has had to design to handle the huge amount of real-time data that the Apple Vision Pro captures through all its sensors. The R1 is designed to reduce the latency of all that sensor data and achieve a far more fluid experience.

Of course, everything has a "bad" side, and in this case it is battery life. We can enjoy our Apple Vision Pro for 2 hours with the included external battery, a power-bank-style (or flask-style) pack that connects by cable. However, the battery can be easily swapped, or the headset can be plugged directly into the mains.

It seems that some of the rumors pointed in the right direction on the final design of the Vision Pro, but they fell short of this work of art and engineering.

Availability and price

The Apple Vision Pro has required more than 5,000 patents, as Apple confirmed in the presentation. And what we expected has also come true: the price will start at $3,499 for an exclusive device that will arrive early next year, first in the United States.

However, it is not yet known how many storage options it will have, or whether there will be only a single model. The Apple Vision Pro has exceeded all expectations, and Cupertino has surprised the world with everything it presented at WWDC23.

Welcome to the legacy of Tim Cook. Welcome to the worldwide revolution of the Apple Vision Pro.